Have you ever watched a relay race where the baton is dropped? Each runner might be fast, but if the handoff isn’t smooth, the whole team loses. In the world of software, it’s the same story — your modules and systems might perform flawlessly on their own, but when they need to work together, things can go awry.

That’s where system integration testing steps in, ensuring that every handoff, every interaction between systems, is smooth and glitch-free. Let’s unpack the best practices of system integration testing and everything you need to know to make it as sharp and reliable as a well-coordinated relay team.

What Is System Integration Testing?

System integration testing (SIT) is the process of verifying that different modules or systems within a software architecture work together as expected. It doesn’t just test whether the parts function independently but focuses on the interfaces and interactions between them.

For instance, in IoT testing, SIT verifies that devices using different protocols and data formats can successfully exchange information and perform integrated functions.

Think of it this way: you’ve got different departments in a company. Individually, each department might be highly efficient, but they also need to collaborate. SIT ensures that when the accounting department sends an invoice to the sales team, it doesn’t end up in IT’s spam folder.

Effective SIT helps ensure that IT integration efforts result in a cohesive system where data and functionality flow seamlessly across all components.

Types of Integration Testing

Let’s take a brief look at the different types of integration testing:

- Big Bang integration testing: This method throws everything into the mix at once — testing all modules together as a complete system.

- Incremental testing: Instead of testing everything at once, incremental testing breaks the process into smaller, manageable parts, integrating and testing modules one at a time or in small groups.

- Top-down testing: Start from the high-level modules and work your way down.

- Bottom-up testing: The reverse of top-down. You start with the nitty-gritty low-level modules and build up.

- Sandwich testing: Why choose when you can combine? This method mixes top-down and bottom-up approaches, integrating the upper and lower layers of the system simultaneously.
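Top-down and bottom-up testing differ mainly in what stands in for the pieces that are not integrated yet: top-down testing replaces missing lower-level modules with stubs, while bottom-up testing exercises real low-level modules through a driver that plays the role of the missing caller. Sandwich testing runs both kinds of step and meets in the middle. The Python sketch below illustrates the idea with hypothetical modules (`OrderService`, `TaxCalculator`, `StubTaxCalculator`) and a made-up 20% tax rule; it is an illustration, not a prescribed framework.

```python
# Hypothetical two-layer system: a high-level OrderService depends on a
# low-level TaxCalculator. Names and the tax rule are illustrative only.


class TaxCalculator:
    """Real low-level module: computes tax on an amount in cents."""

    def __init__(self, rate_percent: int) -> None:
        self.rate_percent = rate_percent

    def tax_for(self, amount_cents: int) -> int:
        # Integer arithmetic (rounding down) avoids floating-point surprises.
        return amount_cents * self.rate_percent // 100


class OrderService:
    """Real high-level module: totals an order using whatever tax component it is given."""

    def __init__(self, tax_calculator) -> None:
        self.tax_calculator = tax_calculator

    def total(self, item_prices_cents: list[int]) -> int:
        subtotal = sum(item_prices_cents)
        return subtotal + self.tax_calculator.tax_for(subtotal)


class StubTaxCalculator:
    """Top-down: a stub replaces the not-yet-integrated low-level module
    and returns a fixed, predictable value."""

    def tax_for(self, amount_cents: int) -> int:
        return 0


def top_down_check() -> int:
    # The real high-level module runs against the stub.
    return OrderService(StubTaxCalculator()).total([1000, 500])


def bottom_up_driver() -> list[int]:
    # Bottom-up: a throwaway driver calls the real low-level module directly,
    # standing in for the high-level caller that is not integrated yet.
    calc = TaxCalculator(rate_percent=20)
    return [calc.tax_for(amount) for amount in (0, 1000, 1505)]


def integrated_check() -> int:
    # Once both layers pass on their own, join the real components.
    return OrderService(TaxCalculator(rate_percent=20)).total([1000, 500])


print(top_down_check(), bottom_up_driver(), integrated_check())
# 1500 [0, 200, 301] 1800
```

The stub makes the top-down result predictable (no tax is added), so any failure at that stage points at the high-level module; the driver does the same for the low-level module in isolation.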

What Is the Purpose of System Integration Testing?

The ultimate goal of SIT is to ensure that all parts of the software ecosystem work cohesively. Whether it’s two systems communicating with each other or a complex web of interdependent modules, SIT identifies issues in communication, data flow, and overall integration.

The purpose can be broken down as follows:

- Detecting interface defects: Interfaces between systems often house the trickiest bugs. SIT helps detect errors at these integration points before they cause havoc.

- Ensuring functionality: Even if individual modules work in isolation, their combined functionality might fail due to unforeseen interactions. SIT ensures that the end-to-end process functions correctly.

- Verifying data consistency: When different systems communicate, the data exchanged must be consistent. SIT makes sure that the data passed between modules or systems is accurate and reliable.

- Improving reliability: Once integration issues are ironed out, the overall system becomes more stable, reducing the risk of breakdowns in production.
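One concrete way to verify data consistency is to reconcile the records each system holds after an exchange. The sketch below compares hypothetical order records sent by a source system with what a target system received, keyed by an assumed `order_id` field, and reports what went missing, what appeared unexpectedly, and what changed in transit.

```python
from typing import Any

Record = dict[str, Any]


def reconcile(source: list[Record], target: list[Record], key: str = "order_id") -> dict[str, list]:
    """Compare records sent by one system with records received by another.

    Returns keys missing from the target, keys the target has that the
    source never sent, and keys present in both whose field values differ.
    """
    src = {r[key]: r for r in source}
    tgt = {r[key]: r for r in target}
    return {
        "missing": sorted(src.keys() - tgt.keys()),
        "unexpected": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


sent = [
    {"order_id": 1, "amount_cents": 1000, "currency": "USD"},
    {"order_id": 2, "amount_cents": 2500, "currency": "USD"},
    {"order_id": 3, "amount_cents": 700, "currency": "EUR"},
]
received = [
    {"order_id": 1, "amount_cents": 1000, "currency": "USD"},
    {"order_id": 2, "amount_cents": 250, "currency": "USD"},  # value corrupted in transit
    {"order_id": 4, "amount_cents": 900, "currency": "EUR"},  # never sent
]

print(reconcile(sent, received))
# {'missing': [3], 'unexpected': [4], 'mismatched': [2]}
```

Running a check like this after each test exchange turns "the data looks right" into a concrete, repeatable assertion.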

System Integration Testing Best Practices

To truly get the most out of SIT, it’s not enough to just run the tests; you need a strategic approach.

Let’s dive into the best practices that will help ensure your systems work together like clockwork.

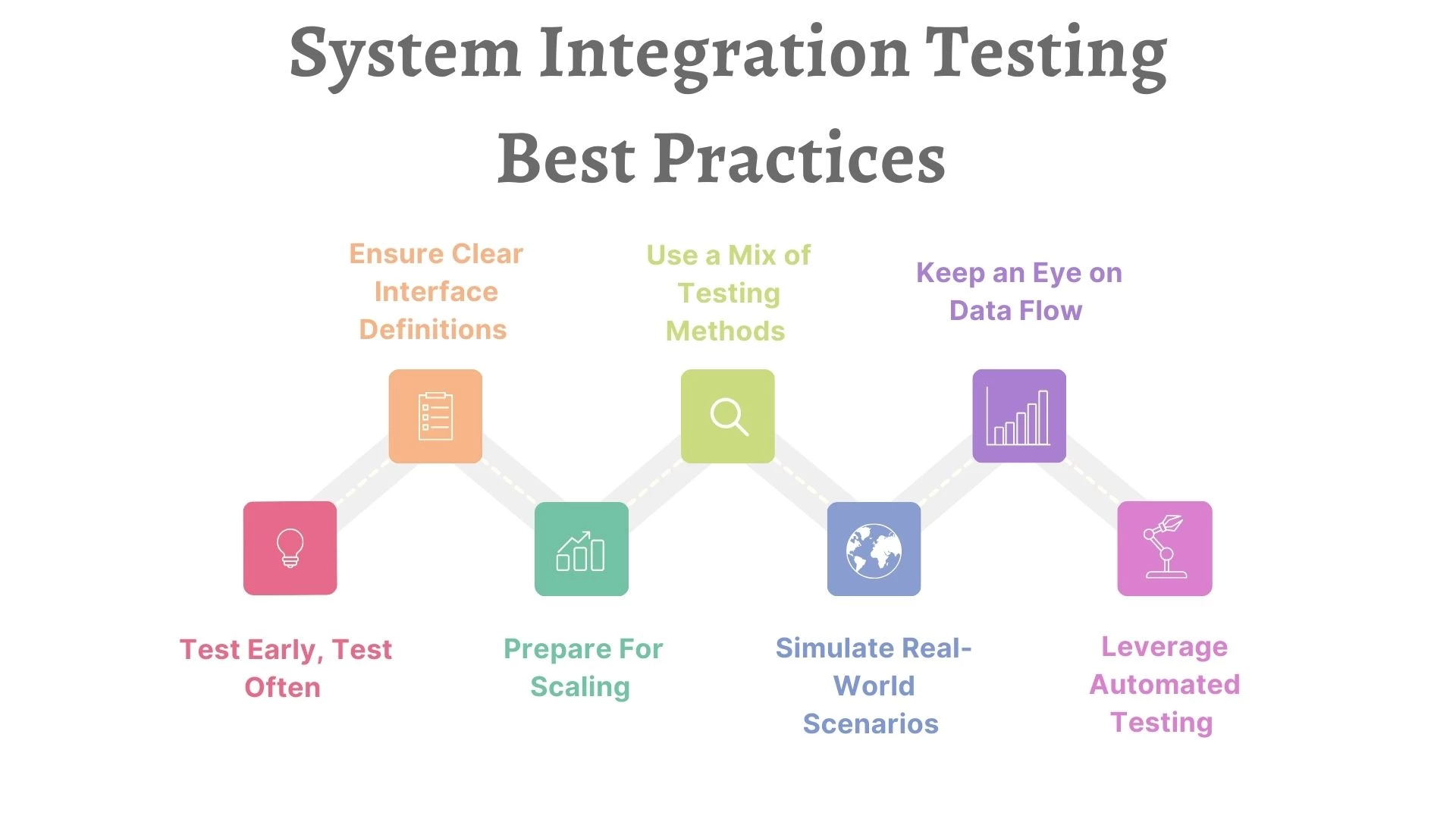

- Test early, test often

- Ensure clear interface definitions

- Prepare for scaling

- Use a mix of testing methods

- Simulate real-world scenarios

- Keep an eye on data flow

- Leverage automated testing

Test Early, Test Often

Don’t wait until all modules are fully developed to start testing. Incorporate SIT into your development cycle as soon as the first components are ready. By adopting a continuous integration approach, you can catch integration bugs before they balloon into bigger problems.

Alejandro Córdoba Borja, CEO of Tres Astronautas, advocates for a test-first approach, explaining: “For each piece of functionality, tests are written first, which ensures that integration points are validated at every step. This approach catches integration errors early and keeps the system consistent.”

By testing early and continuously, you’re not just fixing problems — you’re avoiding them altogether.
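In a test-first workflow, the test for an integration point is written before the code that satisfies it. The pytest-style sketch below assumes a hypothetical `CheckoutService` that must reserve stock in a hypothetical `InventoryService` when an order is placed: the two tests come first and pin down the handoff, and the minimal classes underneath are what you would write afterward to make them pass.

```python
# Step 1 (written first): tests describing the handoff between the two services.
def test_checkout_reserves_stock():
    inventory = InventoryService(stock={"widget": 5})
    checkout = CheckoutService(inventory)

    order_id = checkout.place_order("widget", quantity=2)

    assert order_id == 1
    assert inventory.available("widget") == 3


def test_checkout_rejects_oversold_order():
    inventory = InventoryService(stock={"widget": 1})
    checkout = CheckoutService(inventory)
    try:
        checkout.place_order("widget", quantity=2)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for insufficient stock")
    # A rejected order must leave stock untouched on the other side of the interface.
    assert inventory.available("widget") == 1


# Step 2 (written afterward): the simplest code that makes the tests pass.
class InventoryService:
    def __init__(self, stock: dict[str, int]) -> None:
        self._stock = dict(stock)

    def available(self, sku: str) -> int:
        return self._stock.get(sku, 0)

    def reserve(self, sku: str, quantity: int) -> None:
        if self.available(sku) < quantity:
            raise ValueError(f"insufficient stock for {sku}")
        self._stock[sku] -= quantity


class CheckoutService:
    def __init__(self, inventory: InventoryService) -> None:
        self._inventory = inventory
        self._next_order_id = 1

    def place_order(self, sku: str, quantity: int) -> int:
        # Reserve first so a failed reservation never creates an order.
        self._inventory.reserve(sku, quantity)
        order_id = self._next_order_id
        self._next_order_id += 1
        return order_id
```

Saved as a `test_*.py` file, this is collected by pytest, so the same integration checks can run on every commit in a continuous integration pipeline.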

Ensure Clear Interface Definitions

Miscommunication between modules often stems from poorly defined interfaces, much like a conversation where one person speaks in technical jargon while the other responds in slang — it’s a recipe for misunderstanding. To avoid such disconnects, it’s essential to establish clear, precise interface definitions for every module interaction.

When interfaces are vague or inconsistent, modules can misinterpret the data they receive, leading to “translation” errors that cascade throughout the system. A well-defined interface acts like a universal translator, ensuring that every module, no matter how complex or distinct, communicates with others in a consistent, predictable manner.
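In Python, one lightweight way to write an interface definition down, instead of leaving it implicit, is a shared data type plus a `typing.Protocol`. The sketch below assumes a hypothetical `PaymentGateway` interface: every payment module must match its shape, and a static type checker such as mypy can flag implementations that drift from it. Note that `runtime_checkable` only enables a basic `isinstance` check that the method exists; it does not verify signatures.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Protocol, runtime_checkable


@dataclass(frozen=True)
class PaymentRequest:
    """The agreed payload: field names, types and units are part of the contract."""
    order_id: str
    amount: Decimal   # major currency units, e.g. Decimal("19.99")
    currency: str     # ISO 4217 code, e.g. "USD"


@dataclass(frozen=True)
class PaymentResult:
    order_id: str
    approved: bool
    reason: str = ""


@runtime_checkable
class PaymentGateway(Protocol):
    """Interface every payment module must implement."""

    def charge(self, request: PaymentRequest) -> PaymentResult: ...


class FakeGateway:
    """A test double that satisfies the interface structurally (no inheritance needed)."""

    def charge(self, request: PaymentRequest) -> PaymentResult:
        if request.amount <= 0:
            return PaymentResult(request.order_id, approved=False, reason="non-positive amount")
        return PaymentResult(request.order_id, approved=True)


def checkout(gateway: PaymentGateway, order_id: str, amount: Decimal) -> PaymentResult:
    # The caller depends only on the interface, never on a concrete gateway.
    return gateway.charge(PaymentRequest(order_id, amount, "USD"))


gateway = FakeGateway()
assert isinstance(gateway, PaymentGateway)  # checks method presence only
print(checkout(gateway, "A-1", Decimal("19.99")).approved)  # True
```

Because both sides import the same `PaymentRequest` and `PaymentGateway`, a change to the contract shows up as a type error or failing test rather than as a silent "translation" error at runtime.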

Prepare for Scaling

As your system grows, so does the complexity of your testing. A common pitfall is using outdated test scripts that no longer align with the current system. Regularly review, update, and optimize your test cases to ensure they keep up with the system’s evolution.

Pavel Klachkou, CTO of Routine Automation, highlights several key practices that help maintain testing efficiency at scale: “We are using Salesforce DevOps Center to run and execute automated tests, relying on Salesforce to execute tests and maintain code quality and structure to simplify the process. We use unified module names, where class naming reflects functionality and module belonging, correlating across systems.”

This organized structure ensures tests remain relevant and easily traceable as the system evolves.

He also emphasizes the importance of mocks and virtualization, which let tests focus on specific module internals, as well as the power of automation: “Running tests automatically as part of pipelines ensures continuous validation.”
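The idea of using mocks to focus a test can be illustrated with Python's standard `unittest.mock`: the external dependency is replaced with a mock so the test examines exactly what crosses one integration point. The `OrderProcessor` module and its `create_shipment` call are hypothetical, and this is a generic Python sketch, not a description of the Salesforce tooling mentioned above.

```python
from unittest.mock import Mock


class OrderProcessor:
    """Hypothetical module under test; it calls an external shipping service."""

    def __init__(self, shipping_client) -> None:
        self.shipping_client = shipping_client

    def ship(self, order_id: str, weight_kg: float) -> str:
        if weight_kg <= 0:
            raise ValueError("weight must be positive")
        # Integration point: the exact call made to the external system.
        response = self.shipping_client.create_shipment(order_id=order_id, weight_kg=weight_kg)
        return response["tracking_number"]


def test_ship_sends_expected_payload():
    # The mock stands in for the real (slow, costly, or unavailable) shipping service.
    client = Mock()
    client.create_shipment.return_value = {"tracking_number": "TRK-123"}

    tracking = OrderProcessor(client).ship("A-1", 2.5)

    assert tracking == "TRK-123"
    # Verify what crossed the interface, not just the return value.
    client.create_shipment.assert_called_once_with(order_id="A-1", weight_kg=2.5)


def test_invalid_weight_never_reaches_shipping_service():
    client = Mock()
    try:
        OrderProcessor(client).ship("A-2", 0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError")
    client.create_shipment.assert_not_called()
```

Mocks like this keep a test focused and fast; they complement, rather than replace, runs against the real service in a shared test environment.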

Klachkou further stresses the need to “keep it real,” meaning analyzing real data and producing masked test data with the same data patterns to ensure accurate results.
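Producing masked test data that keeps real data patterns can be sketched as replacing each letter or digit with a random one of the same kind while preserving punctuation, length and case, so values such as emails and phone numbers keep their shape for downstream systems. This is an illustrative approach, not the method Klachkou's team uses, and not a vetted anonymization scheme. Seeding the generator from a hash of each value (with a hypothetical salt) makes masking deterministic, so the same customer masks the same way in every system and records still join across integration points.

```python
import hashlib
import random
import string


def mask_value(value: str, salt: str = "test-env-salt") -> str:
    """Replace letters and digits with random ones of the same kind.

    Punctuation, spacing, length and letter case are preserved, so the masked
    value keeps the shape of the original. Seeding from a hash of the value
    makes the mapping deterministic: the same input always yields the same output.
    """
    digest = hashlib.sha256((salt + value).encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest, "big"))
    out = []
    for ch in value:
        if ch.isdigit():
            out.append(rng.choice(string.digits))
        elif ch.isupper():
            out.append(rng.choice(string.ascii_uppercase))
        elif ch.islower():
            out.append(rng.choice(string.ascii_lowercase))
        else:
            out.append(ch)  # keep separators such as '@', '.', '-', ' '
    return "".join(out)


def mask_record(record: dict, sensitive_fields: set[str]) -> dict:
    """Mask only the fields flagged as sensitive; leave the rest untouched."""
    return {k: mask_value(v) if k in sensitive_fields else v for k, v in record.items()}


customer = {"id": "C-1042", "email": "jane.doe@example.com", "phone": "555-0142", "tier": "gold"}
masked = mask_record(customer, {"email", "phone"})
print(masked)
```

Which fields count as sensitive, and whether the salt must be kept secret, are decisions for your own data-protection requirements; the sketch only shows how patterns can survive masking.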

Use a Mix of Testing Methods

Big Bang testing might sound efficient — like solving all your integration woes in one grand sweep — but in reality, it can leave you scrambling to find where the issues lie, much like throwing all your ingredients into a pot without checking if they’ll blend well together. While it may seem quicker, identifying the root cause of problems becomes nearly impossible when everything is tested all at once.

Instead, opt for a balanced approach that combines strategies such as top-down, bottom-up, and sandwich testing. Integrating in layers breaks testing into smaller steps, so when a test fails you can trace the problem to the specific module or interface that needs attention.
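The difference between Big Bang and incremental integration shows up in what a failure tells you. The simplified model below uses three hypothetical pipeline modules, one of which (`billing`) has a deliberate interface bug: it reads a field name the pricing module never sends. Integrating everything at once only reports that something failed; adding one module at a time and re-running the checks after each step names the module that broke them. The `integration_check` function is a stand-in for a real integration suite.

```python
from typing import Callable, Optional

Stage = Callable[[dict], dict]


def validate(order: dict) -> dict:
    return {**order, "valid": order["quantity"] > 0}


def price(order: dict) -> dict:
    return {**order, "total": order["quantity"] * order["unit_price"]}


def billing(order: dict) -> dict:
    # Deliberate integration bug: reads "amount", but the pricing module sends "total".
    return {**order, "invoiced": order.get("amount", 0)}


MODULES: list[tuple[str, Stage]] = [("validate", validate), ("price", price), ("billing", billing)]


def integration_check(result: dict) -> bool:
    """Stand-in for an integration suite: every field present must be consistent."""
    if "total" in result and result["total"] != result["quantity"] * result["unit_price"]:
        return False
    if "invoiced" in result and result["invoiced"] != result["total"]:
        return False
    return True


def integrate_big_bang(order: dict) -> bool:
    result = dict(order)
    for _, stage in MODULES:
        result = stage(result)
    return integration_check(result)  # says *that* it failed, not *where*


def integrate_incrementally(order: dict) -> Optional[str]:
    """Add one module at a time; return the first module whose addition breaks
    the checks, or None if everything integrates cleanly."""
    result = dict(order)
    for name, stage in MODULES:
        result = stage(result)
        if not integration_check(result):
            return name
    return None


order = {"quantity": 2, "unit_price": 50}
print(integrate_big_bang(order))       # False
print(integrate_incrementally(order))  # billing
```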

Simulate Real-World Scenarios

Don’t just test happy paths — where everything runs smoothly and according to plan. Real-world systems rarely operate in a bubble of perfection. To ensure your system is robust and resilient, you need to simulate real-world scenarios where things can and do go wrong. This means thinking beyond standard use cases and testing for edge cases that might seem unlikely but could disrupt your system.

Testing under realistic conditions is what makes SIT a comprehensive, practical part of your software testing approach, rather than a check that only passes in ideal circumstances.

For instance, what happens if there’s network latency? Will the system still process requests correctly, or will it choke on delayed responses? Consider scenarios where users input data in unexpected formats, introduce errors, or behave unpredictably — after all, real users don’t always follow the rules. Simulating these behaviors helps you identify weak points that could lead to crashes or errors.
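Both scenarios above can be simulated in ordinary tests. The sketch below uses `asyncio.sleep` to stand in for network latency from a hypothetical downstream inventory service and `asyncio.wait_for` to enforce a timeout, then feeds a hypothetical `parse_quantity` function with the kinds of messy input real users and upstream systems send. The timeout values and the "return None on timeout" policy are assumptions chosen for illustration.

```python
import asyncio
from typing import Optional


async def inventory_service(sku: str, delay_s: float) -> int:
    """Hypothetical downstream service; delay_s simulates network latency."""
    await asyncio.sleep(delay_s)
    return 7


async def get_stock(sku: str, delay_s: float, timeout_s: float = 0.2) -> Optional[int]:
    """Caller under test: it must degrade gracefully instead of hanging."""
    try:
        return await asyncio.wait_for(inventory_service(sku, delay_s), timeout=timeout_s)
    except asyncio.TimeoutError:
        return None  # e.g. show "availability unknown" rather than crash


def parse_quantity(raw: object) -> int:
    """Input from users or other systems may not be a clean integer."""
    if isinstance(raw, bool):
        raise ValueError("boolean is not a quantity")
    if isinstance(raw, int):
        value = raw
    elif isinstance(raw, str) and raw.strip().isdigit():
        value = int(raw.strip())
    else:
        raise ValueError(f"unparseable quantity: {raw!r}")
    if value <= 0:
        raise ValueError("quantity must be positive")
    return value


def test_fast_response_returns_stock():
    assert asyncio.run(get_stock("widget", delay_s=0.0)) == 7


def test_latency_beyond_timeout_degrades_gracefully():
    assert asyncio.run(get_stock("widget", delay_s=1.0, timeout_s=0.2)) is None


def test_unexpected_input_formats():
    assert parse_quantity(" 4 ") == 4
    for bad in ["four", -1, None, True, ""]:
        try:
            parse_quantity(bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted bad input {bad!r}")
```

The point is less the specific policy than making the unhappy path explicit: each test documents how the system is expected to behave when the network or the input misbehaves.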

Keep an Eye on Data Flow

One of the most common culprits behind integration issues is poor data communication between systems. It’s not just about ensuring that modules can talk to each other — it’s about making sure they’re having the right conversation. Miscommunication about data can lead to anything from minor glitches to full-blown system failures.

Start by clearly defining the data formats, protocols, and expected inputs and outputs for each module. Even a minor inconsistency in the data format — like a missing field or a mistyped value — can throw off the entire system, leading to errors that are hard to trace. Establish protocols for validating the data at each integration point, so you can catch these errors before they propagate through the system.
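Validating data at an integration point can be as simple as checking each incoming payload against an agreed schema before passing it on. The sketch below hand-rolls a minimal check for an assumed `SHIPMENT_SCHEMA`; in practice a schema-validation library such as `jsonschema` may be a better fit, but the principle is the same: fail fast, with a message that names the offending field and the system it came from.

```python
SHIPMENT_SCHEMA: dict[str, type] = {
    "order_id": str,
    "weight_kg": float,  # strict: an int weight is rejected; relax if the contract allows it
    "destination_country": str,
}


class IntegrationDataError(ValueError):
    """Raised when a payload crossing an interface breaks the agreed format."""


def validate_payload(payload: dict, schema: dict[str, type], source: str) -> dict:
    """Check required fields and types; reject unknown fields so typos surface early."""
    missing = [f for f in schema if f not in payload]
    unknown = [f for f in payload if f not in schema]
    wrong_type = [
        f"{f} (expected {t.__name__}, got {type(payload[f]).__name__})"
        for f, t in schema.items()
        if f in payload and not isinstance(payload[f], t)
    ]
    problems = []
    if missing:
        problems.append(f"missing fields: {missing}")
    if unknown:
        problems.append(f"unknown fields: {unknown}")
    if wrong_type:
        problems.append(f"wrong types: {wrong_type}")
    if problems:
        raise IntegrationDataError(f"payload from {source}: " + "; ".join(problems))
    return payload  # valid payloads pass through unchanged


good = {"order_id": "A-1", "weight_kg": 2.5, "destination_country": "DE"}
bad = {"order_id": "A-2", "weight": 2.5, "destination_country": "DE"}  # mistyped field name

validate_payload(good, SHIPMENT_SCHEMA, source="warehouse")
try:
    validate_payload(bad, SHIPMENT_SCHEMA, source="warehouse")
except IntegrationDataError as exc:
    print(exc)
# payload from warehouse: missing fields: ['weight_kg']; unknown fields: ['weight']
```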

Leverage Automated Testing

Manual testing has its place, but when it comes to repetitive integration tests, automation is a game changer. By automating these routine tests, you not only save time but also reduce the chances of human error. After all, no one wants to run the same test over and over by hand, especially as systems scale and the number of integration points grows.

Automation is particularly useful for regression testing — the process of ensuring that new updates or integrations don’t break existing functionality. Every time you introduce a new module or update an existing one, regression tests can be automatically triggered to verify that the rest of the system remains intact.
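A common way to automate regression checks at an integration point is to keep a set of recorded input and expected-output cases and replay them on every change. The `unittest` sketch below assumes a hypothetical `to_invoice_line` function that converts orders from a shop system into the format an accounting system expects; `subTest` reports each failing case separately instead of stopping at the first one.

```python
import unittest


def to_invoice_line(order: dict) -> dict:
    """Hypothetical integration point: converts an order from the shop system
    into the line format the accounting system expects."""
    return {
        "sku": order["sku"].upper(),
        "qty": order["quantity"],
        "amount_cents": order["quantity"] * order["unit_price_cents"],
    }


# Recorded cases: inputs seen at the interface and the output accounting accepted.
# Adding a case whenever a bug is fixed keeps that bug from silently returning.
REGRESSION_CASES = [
    ({"sku": "w-1", "quantity": 2, "unit_price_cents": 499},
     {"sku": "W-1", "qty": 2, "amount_cents": 998}),
    ({"sku": "G-7", "quantity": 1, "unit_price_cents": 0},
     {"sku": "G-7", "qty": 1, "amount_cents": 0}),
]


class InvoiceInterfaceRegressionTests(unittest.TestCase):
    def test_recorded_cases(self):
        for order, expected in REGRESSION_CASES:
            with self.subTest(order=order):
                self.assertEqual(to_invoice_line(order), expected)
```

Saved as a `test_*.py` file, this is picked up by `python -m unittest discover`, which a CI pipeline can run automatically whenever a module is added or updated.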

Best practices for SIT can also vary based on the software development methodology and the number of systems involved.

Who Performs System Integration Testing?

Now that we understand the importance of SIT, you might wonder: Who’s responsible for all this heavy lifting?

SIT is a collaborative effort between several key players.

- QA engineers: These folks lead the charge. They design the test cases, run the scripts, and analyze the results to identify integration issues.

- Developers: While QA takes the wheel, developers play a crucial support role. They help by fixing integration bugs, writing unit tests for their code, and assisting in debugging complex issues.

- Business analysts: They ensure that the system’s functionality aligns with the business requirements. If there’s a mismatch between what’s being tested and what the client expects, BAs help steer the project back on course.

For companies with complex, multi-system infrastructures, or those that would rather keep their in-house team focused elsewhere, outsourcing SIT to a system integrator agency can be invaluable. These agencies specialize in the complexities of integrating various software components and systems, and they bring in dedicated testers and domain experts to help the system operate seamlessly.

System Integration Testing Best Practices: Bottom Line

System integration testing isn’t a task you can afford to overlook. It’s the glue that holds together the various pieces of your software puzzle, ensuring they fit seamlessly. Without it, you risk pushing faulty, incompatible systems into production, leading to frustration, downtime, and even financial losses.

Remember, the goal is for your systems to work together as smoothly as a well-coordinated team, not a disjointed group struggling to pass the baton.


System Integration Testing Best Practices FAQs

What’s the difference between system testing and system integration testing?

System testing evaluates the complete, integrated system as a whole against its requirements, while system integration testing zeroes in on the interactions between individual modules or systems.

How often should system integration testing be done?

Ideally, SIT should be continuous throughout the development process, especially when new modules or changes are integrated into the system.