Web scraping APIs help you gather web data for pricing intelligence, market monitoring, and research without managing proxies, browsers, or anti-bot systems yourself.

In this guide, we compare the best providers and highlight where each one fits depending on your scraping workload.

Web Scraping APIs: Key Findings

- Zyte and ScrapingBee lead in reliability on protected sites: Zyte reached about 93% success in benchmark tests, and ScrapingBee was among the APIs that unblocked more than 80% of difficult targets.

- Bright Data and ScrapFly focus on enterprise-grade scraping, built to handle heavily protected websites and large-scale data pipelines.

- ScraperAPI, Scrape.do, and Scrapingdog prioritize speed and monitoring workloads, making them common choices for price tracking and competitor intelligence.

How To Choose the Most Reliable Web Scraping API?

Two things are driving buyer behavior right now:

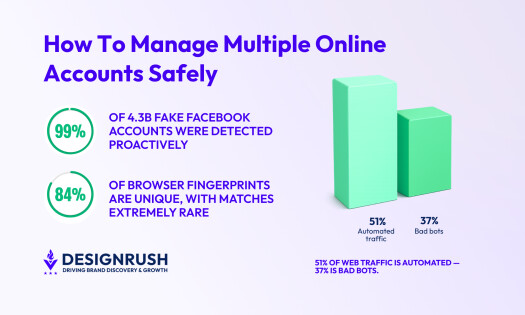

- More companies are buying scraping instead of building it. The web scraping market is estimated at $1.17B in 2026, up from $1.03B in 2025, and projected to keep growing through 2031.

- Websites are getting more hostile to automated access. TollBit data for Q4 2025 showed that 1 in 50 website visits came from an AI scraper, up from 1 in 200 earlier in 2025. A portion of those bots bypassed robots.txt, which is fueling more aggressive defenses.

These two trends are why operational fit matters more than a long feature list.

Here are the criteria real users weigh when deciding between providers:

- Target difficulty: If your core targets use heavy anti-bot, choose unblocking reliability first. If targets are mostly easy, choose cost predictability and throughput.

- Cost predictability: Prefer providers that make it easy to estimate cost per 1,000 successful pages for your mix of easy vs. protected targets.

- Retry behavior and failure mode: The best providers automatically retry and rotate infrastructure when a request fails instead of pushing the burden back to the user.

- Rendering strategy: Choose a provider that supports HTML-first with rendering only when needed, and gives you control to toggle it per target.

- Throughput and concurrency limits: Your provider needs to match your peak runs, such as daily refreshes, hourly monitoring, and seasonal spikes, not just your average volume.
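The rendering-strategy criterion above can be sketched as a small fallback routine. This is a minimal illustration rather than any provider's client: `fetch` is a hypothetical callable standing in for whichever API you use, and the `render` flag and `required_marker` check are assumptions made for the example.

```python
from typing import Callable

# Hypothetical signature: fetch(url, render) -> HTML string.
# A real provider client would map `render` to its own request parameter.
Fetch = Callable[[str, bool], str]


def fetch_html_first(url: str, fetch: Fetch, required_marker: str) -> tuple[str, bool]:
    """Try a cheap non-rendered request first and render only if the
    expected content is missing. Returns (html, used_rendering)."""
    html = fetch(url, False)
    if required_marker in html:
        return html, False
    # The marker is absent, so the page probably builds its content with JavaScript.
    return fetch(url, True), True


# Stand-in fetcher for demonstration: the price only appears after rendering.
def fake_fetch(url: str, render: bool) -> str:
    return '<span class="price">19.99</span>' if render else "<div id='app'></div>"


html, rendered = fetch_html_first("https://example.com/item", fake_fetch, 'class="price"')
print(rendered)  # True
```

Because rendered requests usually cost more than plain HTML requests, a per-target check like this keeps the expensive path for the pages that actually need it.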

Understanding these factors makes it easier to match a provider to your workload, whether you need enterprise-grade infrastructure or a simple API for web scraping.
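To make the cost-predictability criterion concrete, here is a rough sketch of how you might estimate cost per 1,000 successful pages for a mix of easy and protected targets. The prices, success rates, and traffic shares are illustrative placeholders, not figures from any provider's price list, and the function names are made up for this example.

```python
def cost_per_1k_successful(price_per_1k_requests: float, success_rate: float,
                           billed_on_success_only: bool) -> float:
    """Effective cost of 1,000 successful pages for one class of target."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    if billed_on_success_only:
        return price_per_1k_requests
    # Failed requests are billed too, so each successful page costs more.
    return price_per_1k_requests / success_rate


def blended_cost(mix: list[tuple[float, float]]) -> float:
    """mix holds (share_of_pages, cost_per_1k_successful) pairs; shares sum to 1."""
    return sum(share * cost for share, cost in mix)


# Illustrative numbers only.
easy = cost_per_1k_successful(1.00, 0.99, billed_on_success_only=False)
protected = cost_per_1k_successful(5.00, 0.80, billed_on_success_only=False)
total = blended_cost([(0.7, easy), (0.3, protected)])
print(round(total, 2))  # 2.58
```

In this example the protected targets make up 30% of the pages but most of the bill, which is why the split between easy and protected targets matters more than the headline price.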

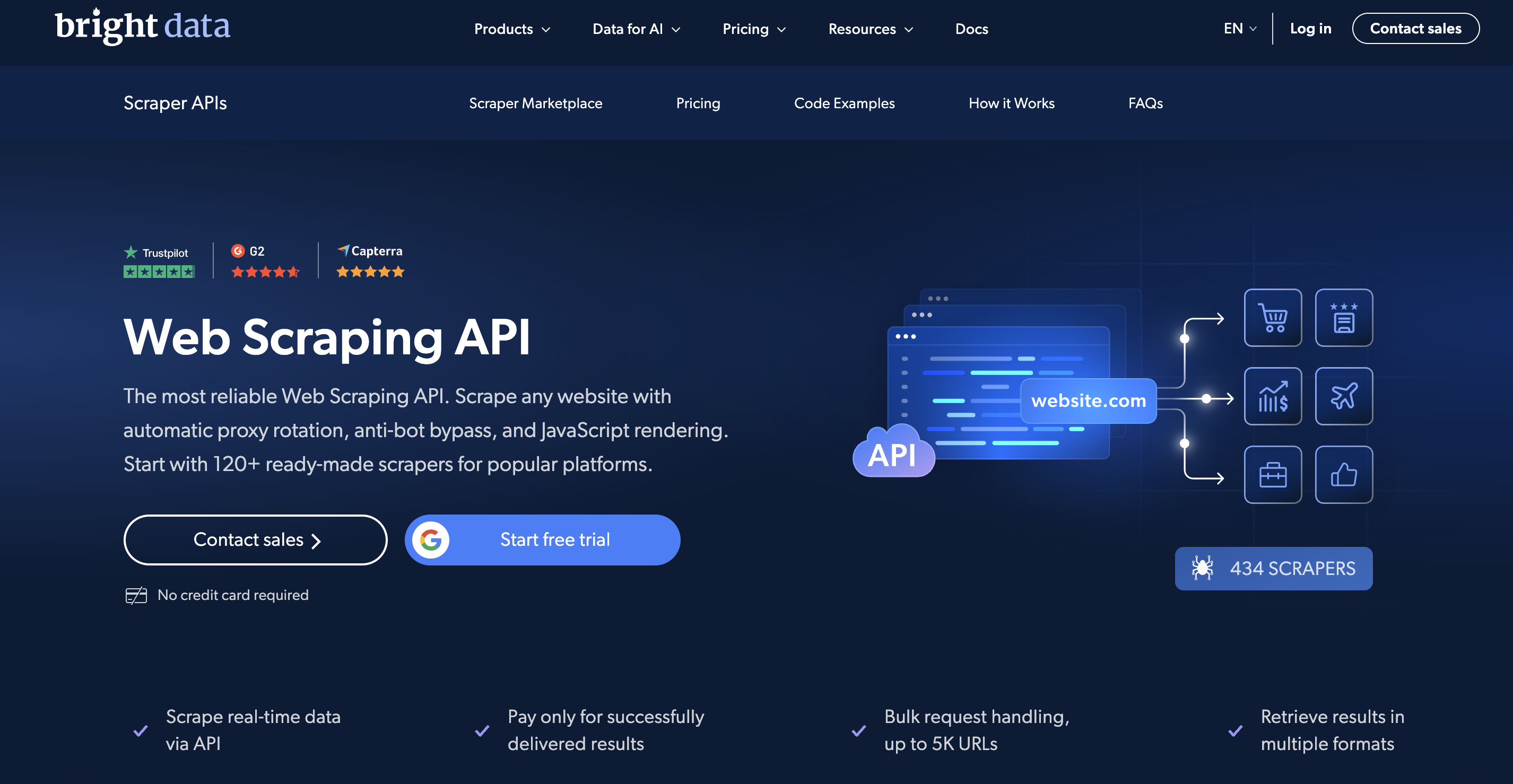

1. Bright Data: Best for Enterprise-Grade Scraping Infrastructure

Choose Bright Data if you need reliable scraping from difficult, high-protection websites at scale.

Bright Data is a high-reliability web scraping API built for companies that need consistent access to difficult, heavily protected websites. It operates a broader data platform that includes a Dataset Marketplace, SERP API, Browser API, and Web Archive, enabling end-to-end data acquisition.

It also handles IP rotation, anti-bot protection, and JavaScript rendering automatically, removing the need to maintain your own proxy or browser infrastructure.

Used by over 20,000 companies worldwide, from Fortune 500 enterprises to AI startups, Bright Data is designed for businesses running large-scale scraping operations where blocked requests translate into lost data, broken pipelines, or inaccurate market intelligence.

Key Features:

- High success rates on protected sites, often reported 80-95%+

- Access to 150M+ residential IPs across 195 countries

- 99.99% platform uptime backed by enterprise SLA

- 460+ ready-made scrapers for 100+ platforms delivering structured JSON/CSV without custom parsing

- Built-in proxy rotation and anti-bot handling

- JavaScript rendering for dynamic websites

- Geo-targeting by country and city-level routing

- Batch and asynchronous job processing

- Scalable infrastructure for large-volume scraping

Pricing:

- Free trial

- Pay as you go: $1.50/1K Records

- 510K Records: $0.98/1K Records

- 1M Records: $0.83/1K Records

- 2.5M Records: $0.75/1K Records

Pros:

- Strong reliability on difficult targets

- Handles complex anti-bot systems automatically

- Scales well for high-volume jobs

- Charges only for successful requests

Cons:

- Premium pricing compared to simpler APIs

- Credit-based pricing can be complex

- Overkill for scraping simple, static websites

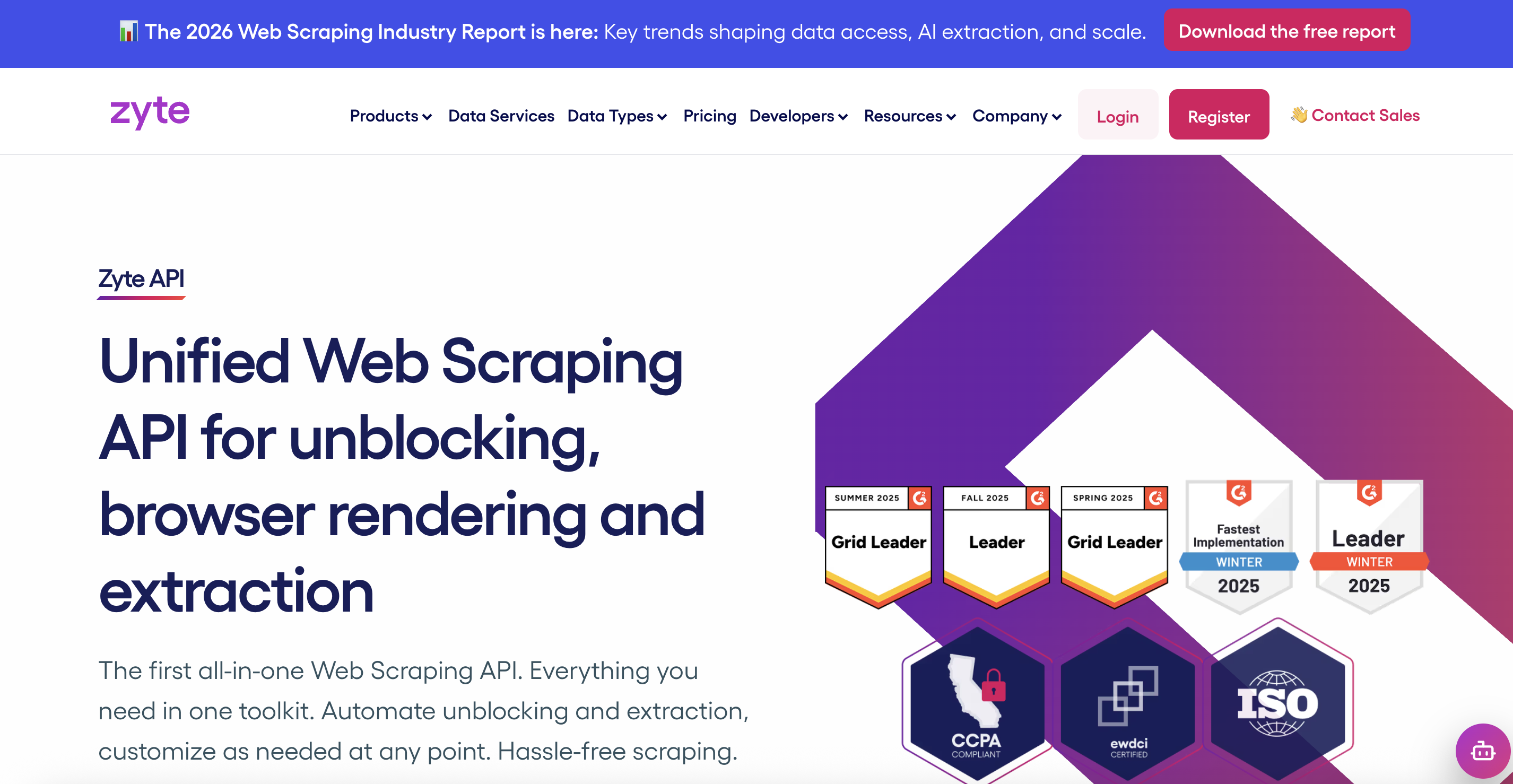

2. Zyte: Best for Long-Term Scraping of Complex Websites

Choose Zyte if you’re running sustained data operations that prioritize reliability over speed or lowest cost.

Zyte API is one of the older, more established scraping APIs on the market. It’s built by the team behind Scrapy and is designed to handle difficult websites through automatic ban detection, proxy management, and rendering.

In Proxyway’s 2025 Web Scraping API Report, Zyte ranked #1 overall for unblocking capability and throughput. It achieved a 93.14% success rate at 2 requests per second, delivered 15,422 results per hour and was identified as one of the fastest under real-world protected-site conditions.

Zyte fits multi-site monitoring, retail aggregation, and long-running data pipelines where predictable behavior across many targets matters more than raw speed.

Key Features:

- 93% success rate measured under real test conditions on protected sites

- 11.15 seconds average response time

- Adaptive pricing tiers automatically assigned per target website to optimize cost.

- Automatic ban and block handling that adjusts proxies and techniques per site.

- Built-in headless browser support for JavaScript-heavy pages.

- Optional structured data extraction capabilities

- Geolocation, cookie/session management, and scalable request handling.

Pricing:

- Free trial

- Pay as you go pricing starts from $0.13 per 1,000 responses

- Tier pricing starts from $0.10 per 1,000 responses

Pros:

- Industry benchmark leader for unblocking success and speed (Proxyway’s report)

- Can scale from small scrapes to enterprise workloads without custom proxy stacks

- Data extraction reduces manual parsing effort

Cons:

- Pricing complexity due to tier-based costs per target makes forecasting harder than flat pricing models.

- Requires some familiarity to fine-tune for best performance on certain targets.

3. WebScrapingAPI: Best for Plug-and-Play Web Data Extraction

Choose WebScrapingAPI if you need managed scraping and simple scaling.

WebScrapingAPI is a managed, API-first scraping service aimed at teams who want to collect website data without building and maintaining proxies and headless browsers themselves.

It’s positioned for businesses that need steady scraping for things like monitoring sites, pulling listings, or collecting competitive intel, especially when blocks and CAPTCHAs start showing up.

Key Features:

- High delivery reliability through automated proxy rotation and retry logic

- JavaScript rendering for modern dynamic websites

- Rotating residential and datacenter proxies included

- Geo targeting across 195 countries

- Concurrent request scaling based on plan tier

- Session control for consistent browsing behavior

- Screenshot capture support

Pricing:

- Free trial

- Starter: $19/month

- Basic: $49/month

- Standard: $99/month

Pros:

- Easy integration with a single API endpoint

- No need to manage proxies or browser infrastructure

- Scales through clear concurrency tiers

- Broad geographic targeting coverage

Cons:

- No publicly stated success rate benchmarks

- Pricing increases quickly with higher volumes

- May be unnecessary for very small or simple scraping needs

4. ScraperAPI: Best for Large-Scale Price and Competitor Monitoring

Choose ScraperAPI if you need scalable web data without building your own scraping infrastructure.

ScraperAPI is a managed web scraping API that lets you collect web data without building and maintaining proxy pools, browser infrastructure, and retry logic in-house.

In Proxyway’s benchmark, it was labeled a strong all-rounder. It performed well on mainstream targets like Amazon and Google, where most price monitoring happens, with benchmark averages of 93.30% (Amazon) and 94.78% (Google) across providers.

It’s a good fit when you need a single endpoint for ongoing data collection, and you want the vendor to handle most of the scraping plumbing, but you still want to validate performance on your exact targets.

Key Features:

- Vendor-claimed success rate as high as 99.9%

- Vendor-claimed average response time of 1-3s

- JavaScript rendering supported

- Clear cost multipliers for tougher modes like JS rendering and premium routing

- Public status page with API health, incidents, and component tracking

Pricing:

- Free trial

- Hobby: $49/month

- Startup: $149/month

- Business: $299/month

- Scaling: $475/month

- Enterprise: Custom

Pros:

- Fast to integrate and easy to trial with published plan tiers

- Straightforward scaling through higher concurrency and credit tiers

- JS rendering is available across plans, so you can handle modern sites when needed

- Pricing logic is documented for credit consumption and heavier request modes

Cons:

- Credit pricing can be unpredictable for harder sites

- More expensive at higher usage levels

- Complex error handling may be required for tough pages

5. ScrapingBee: Best for Automatic Proxy and Browser Handling

Choose ScrapingBee if you need reliable, out-of-the-box web data extraction without building infrastructure.

ScrapingBee is a managed web scraping API that lets your team collect data from websites without building your own proxy rotation, browser automation, or anti-bot bypass systems.

It handles headless browser rendering, rotating proxies, and CAPTCHA evasion so you can focus on extracting the data you need.

In Proxyway’s benchmark, ScrapingBee was listed among the four APIs that successfully opened protected targets more than 80% of the time, which is a strong indicator of real-world unblocking capability.

This makes it suitable for businesses that need reliable scraping of dynamic sites and want a simple API without maintaining infrastructure themselves.

Key Features:

- Handles proxy rotation and headless browser rendering automatically

- JavaScript rendering with scenario controls

- Supports screenshots and basic extraction rules

- Worldwide proxy pool to help reduce blocks

- Simple API with multi-language SDKs and examples

Pricing:

- Free trial

- Freelance: $49/month

- Startup: $99/month

- Business: $249/month

- Business+: $599/month

Pros:

- Very easy to integrate with clear API and docs

- Reliable scraping of dynamic and JavaScript-heavy sites

- Good support and customer service

- Free credits to start testing immediately

Cons:

- Credit costs can add up quickly with advanced features

- Less granular control for expert scrapers

- Pricing may feel high for very large volumes

- Not optimized for highly targeted enterprise workflows

6. Crawlbase: Best for Reliable Removal of Blocks and CAPTCHAs

Choose Crawlbase if you need scalable web scraping with built-in unblocking and proxy handling through a single API.

Crawlbase is a managed web scraping API built for teams that want reliable web data without running proxy pools or browser infrastructure.

You send a URL, Crawlbase handles unblocking work like retries and CAPTCHA friction, and you get back the page content.

It is a strong fit for business use cases where scraping needs to run continuously, and failures create reporting gaps.

Key Features:

- Average success rate shown as 99%

- Average API response time documented as 4 to 10 seconds

- CAPTCHA and anti-bot bypass mechanisms

- JavaScript rendering supported via a separate JavaScript token

- Country targeting available through a country parameter

- Multiple APIs: Crawling API, Scraper API, Screenshots API, Smart Proxy

Pricing: Check out Crawlbase’s calculator for more accurate info on pricing.

Pros:

- Easy to start and integrate with minimal setup

- Handles common blocking challenges

- Transparent pricing with free trial requests

- Useful SDKs and developer libraries available

Cons:

- Performance varies by website difficulty, so you still need target testing

- Pricing can vary by domain complexity, which adds forecasting work

7. ScrapFly: Best for Scraping Protected eCommerce and Marketplace Websites

Choose ScrapFly if you want reliable scraping with clear cost visibility, without moving into full enterprise contracts.

ScrapFly is a managed scraping API that sits between lightweight plug-and-play tools and enterprise-grade scraping platforms.

It handles proxies, anti-bot bypass, and JavaScript rendering automatically, but unlike some competitors, it exposes detailed request parameters and billing logic, so you understand exactly what you’re paying for.

It’s a strong fit for businesses running ongoing scraping operations that need reliability but also want cost visibility and granular request configuration.

Key Features:

- Reported success rates up to ~98% on protected websites

- Typical response times around 5 to 10 seconds on dynamic targets

- Automatic proxy rotation with residential and datacenter options

- JavaScript rendering for modern websites

- Session management for multi-step scraping

- Structured data extraction options

- Screenshot support

Pricing:

- Free trial

- Discovery: $30/month

- Pro: $100/month

- Startup: $250/month

- Enterprise: $500/month

- Custom tier

Pros:

- Strong reliability for eCommerce and marketplace scraping

- Transparent credit consumption model

- Good balance between automation and configuration

- Suitable for sustained data pipelines

Cons:

- Response times are slower than simple HTML fetch APIs

- Credit costs increase with residential proxies and rendering

- Requires testing against your specific targets

8. Scrape.do: Best for High-Frequency Competitor Tracking

Choose Scrape.do if you need very high reliability and speed when extracting web data from sites with strong anti-bot systems.

Scrape.do is built for teams that are tired of scraping breaking at scale. It focuses on making protected websites accessible without forcing you to manage proxy pools, browser automation, or constant retry logic.

The platform is designed for ongoing data operations like price tracking, marketplace monitoring, and competitive intelligence where interruptions aren’t acceptable. It’s straightforward to integrate and structured around a pay-for-success model.

Key Features:

- Reported success rate up to ~99% on protected sites

- Reported average response time under 5 seconds on many targets

- Automatic proxy rotation including residential, mobile, and datacenter IPs

- Advanced anti-bot bypass targeting systems like Cloudflare and DataDome

- JavaScript rendering support

- Geo-targeting capabilities

Pricing:

- Free trial

- Hobby: $29/month

- Pro: $99/month

- Business: $249/month

- Advanced: $699/month

Pros:

- Fast response times compared with many competitors

- Handles modern anti-bot defenses out of the box

- Transparent pricing based on successful calls

- Strong customer support and positive user ratings

Cons:

- Costs scale with volume and advanced proxy use

- Returns raw content that must be parsed

9. ZenRows: Best for Flexible, Mixed-Difficulty Scraping Workloads

Choose ZenRows if you want a single scraping subscription you can apply across API requests and browser-based scraping, with clear basic vs. protected usage limits.

ZenRows is a scraping API suite positioned less as a single fetch endpoint and more as a toolkit covered by one subscription. The main product is its Universal Scraper API, but it also offers a Scraping Browser and residential proxies under the same plan.

Instead of complex credit multipliers, plans clearly separate basic results from protected results, which makes budgeting easier when scraping a mix of simple and bot-protected sites.

Additionally, it offers built-in response filtering options, such as link and email extraction, beyond just returning raw HTML.

Key Features:

- Success rate above 99% against Cloudflare and other anti-bots

- JavaScript rendering using a headless browser option

- CAPTCHA bypass marketed as a supported product feature

- WAF bypass marketed for protected sites

- JSON response mode for structured debugging and network insights

- SDK availability for easy integration

Pricing:

- Free trial

- Developer: $69/month

- Startup: $129/month

- Business: $299/month

Pros:

- Subscription can be used across multiple ZenRows products, not locked to one tool

- Clear included usage buckets for basic results and protected results per plan

- Positioned as a strong all-round option in Proxyway’s 2025 report

- Built-in rendering and anti-bot features reduce infrastructure work

Cons:

- Vendor success-rate claims should be validated on your targets

- Concurrency limits can matter at higher throughput depending on plan

- Variable pricing models can balloon 100x at premium tiers

10. Scrapingdog: Best for Reliable Scraping of Major Commercial Platforms

Choose Scrapingdog if you want high-speed, high-success web data extraction at a low cost per request, especially for SERP or eCommerce data.

Scrapingdog is a scraping API that leans heavily into speed and cost efficiency, particularly for common commercial targets like Google Search and Amazon.

Unlike more enterprise-oriented platforms, it offers several purpose-built APIs for specific use cases, like Amazon reviews, which return structured data instead of just raw HTML.

Scrapingdog tends to appeal to teams that prioritize fast turnaround and lower per-request costs over advanced workflow tooling or enterprise feature depth.

Key Features:

- Vendor-reported 100% success rate across selected benchmark targets

- Vendor-reported average response times around 3 to 4 seconds in published comparisons

- Automatic proxy rotation handling IP bans and rate limits

- Headless browser rendering for JavaScript-heavy pages

- Built-in CAPTCHA retry handling

- Dedicated APIs for Google Search, Amazon product data, and Amazon reviews

- Provides structured JSON responses for its dedicated, platform-specific APIs

Pricing:

- Free trial

- Lite: $40/month

- Standard: $90/month

- Pro: $200/month

- Premium: $350/month

Pros:

- High reported success rates on common commercial targets

- Dedicated structured APIs reduce parsing work for SERP and Amazon

- Lower entry pricing compared to enterprise-tier tools

- Simple REST integration

Cons:

- Performance figures are vendor-published and not third-party benchmark verified

- Documentation depth is lighter than larger platforms

- Costs increase with higher volumes and advanced usage

- Requires your own parsing for general scraping use cases

Comparing The Best Web Scraping API Providers

Factors like success rate on protected websites, response speed, and pricing predictability ultimately determine whether a scraping pipeline runs smoothly or constantly requires maintenance.

The table below compares the providers in this list based on those practical metrics to help narrow down which tools are better suited for different workloads.

| Provider | Best for | Typical Success Rate* | Average Response Time* | Pricing Predictability |
| --- | --- | --- | --- | --- |
| Bright Data | Enterprise-grade scraping infrastructure | 80-95%+ reported on protected sites | 5-10s typical enterprise scraping latency | Medium |
| Zyte | Long-term scraping of complex websites | 93-94% benchmark success | 10-11s average | Medium |
| WebScrapingAPI | Plug-and-play web data extraction | Not publicly benchmarked | 3-10s typical API response | High |
| ScraperAPI | Price and competitor monitoring | 92.7% average reliability | 15.7s average in testing | Medium |
| ScrapingBee | Automatic proxy and browser handling | 84% on difficult targets; up to 100% on some sites | 5-7s typical | Medium |
| Crawlbase | Removing blocks and CAPTCHAs | 99% vendor-reported | 4-10s | Medium |
| ScrapFly | Scraping protected eCommerce websites | 98% success on protected sites | 5-10s typical | Medium |
| Scrape.do | High-frequency competitor tracking | 98% vendor-reported | 4.7s average | High |
| ZenRows | Mixed-difficulty scraping workloads | 90% on some targets | 18s on complex pages | Medium |
| Scrapingdog | Commercial platforms | Up to 100% on some test targets | 3-4s average in tests | High |

*Performance metrics vary by target website, protection level, and request configuration.

While performance benchmarks and pricing models provide a helpful starting point, the best web scraping API ultimately depends on your specific targets, request volume, and data refresh frequency.
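Request volume and refresh frequency translate fairly directly into the concurrency you need from a plan. The sketch below uses a simple rate-times-latency estimate; the workload (50,000 pages per hour) is hypothetical, and the latency and success-rate inputs are round numbers in the range of the table above rather than measured values.

```python
import math


def required_concurrency(pages: int, window_seconds: float,
                         avg_response_seconds: float,
                         success_rate: float = 1.0) -> int:
    """Concurrent requests needed to collect `pages` successful pages in the window."""
    attempts = pages / success_rate            # failed attempts have to be repeated
    requests_per_second = attempts / window_seconds
    # Requests in flight = arrival rate x time each request stays open.
    return math.ceil(requests_per_second * avg_response_seconds)


# Hypothetical workload: 50,000 pages refreshed within one hour.
print(required_concurrency(50_000, 3600, 5.0))         # 70
print(required_concurrency(50_000, 3600, 11.0, 0.93))  # 165
```

A slower but more reliable provider can therefore need a noticeably higher concurrency tier for the same refresh window, which is worth checking against plan limits before committing.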

Reviewing the strengths of each provider in more detail can help you determine which option aligns best with your business use case.

Web Scraping API FAQs

1. What is a web scraping API?

A web scraping API is a service that automatically collects data from websites and returns it as raw HTML or as structured data such as JSON. Instead of building your own scraping infrastructure, the API handles technical challenges like proxy rotation, JavaScript rendering, and anti-bot defenses so you can focus on extracting the data you need.

2. Why use a web scraping API instead of building your own scraper?

Building a scraper in-house requires managing proxies, browser automation, retries, and anti-bot systems. A web scraping API abstracts that complexity by providing a single endpoint that retrieves web pages reliably at scale, which reduces engineering effort and maintenance.
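As a rough picture of what "a single endpoint" tends to look like in practice, the sketch below builds a request URL for a made-up service. The endpoint `https://api.scraper.example/v1/` and the parameter names (`api_key`, `url`, `render_js`, `country`) are hypothetical and do not belong to any provider in this list; check your provider's documentation for the real names.

```python
from typing import Optional
from urllib.parse import urlencode

# Hypothetical endpoint and parameter names, for illustration only.
API_ENDPOINT = "https://api.scraper.example/v1/"


def build_request_url(api_key: str, target_url: str, render_js: bool = False,
                      country: Optional[str] = None) -> str:
    """Encode the target URL and options as query parameters of one endpoint."""
    params = {
        "api_key": api_key,
        "url": target_url,  # urlencode percent-escapes the nested URL
        "render_js": str(render_js).lower(),
    }
    if country:
        params["country"] = country
    return f"{API_ENDPOINT}?{urlencode(params)}"


request_url = build_request_url("YOUR_KEY", "https://example.com/p?id=1", country="us")
print(request_url)
```

The target URL travels as an encoded parameter, so proxy choice, rendering, and geo-targeting become request options instead of infrastructure you run yourself.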

3. What should businesses look for when choosing a web scraping API?

Key factors include success rate on protected websites, response speed, pricing model, scalability, and how well the API handles challenges like CAPTCHAs or dynamic content. Testing providers against your specific target websites is usually the most reliable way to compare them.
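Testing against your own targets can be as simple as the harness sketched below. It is an assumption-laden outline: `fetch` is a hypothetical wrapper around whichever provider you are trialing, and `is_valid` is your own check that the response contains real page content, since a block or CAPTCHA page can come back without raising an error.

```python
import time
from statistics import mean
from typing import Callable, Iterable


def benchmark(fetch: Callable[[str], str], urls: Iterable[str],
              is_valid: Callable[[str], bool]) -> dict[str, float]:
    """Call `fetch` once per URL and report success rate and mean latency."""
    latencies: list[float] = []
    successes = 0
    total = 0
    for url in urls:
        total += 1
        start = time.perf_counter()
        try:
            ok = is_valid(fetch(url))
        except Exception:
            ok = False  # network and provider errors count as failures
        latencies.append(time.perf_counter() - start)
        successes += ok
    return {
        "success_rate": successes / total if total else 0.0,
        "avg_seconds": mean(latencies) if latencies else 0.0,
    }


# Stand-in fetcher for demonstration: one URL succeeds, one is blocked.
def fake_fetch(url: str) -> str:
    if "blocked" in url:
        raise RuntimeError("blocked")
    return "<html><h1>Product</h1></html>"


report = benchmark(fake_fetch,
                   ["https://example.com/a", "https://example.com/blocked"],
                   is_valid=lambda body: "<h1>" in body)
print(report["success_rate"])  # 0.5
```

Running the same URL sample through each shortlisted provider gives you success-rate and latency numbers that are directly comparable for your workload.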

4. Are web scraping APIs legal to use?

Web scraping itself is generally legal when collecting publicly available data, but usage must comply with website terms of service, copyright laws, and applicable data regulations. Businesses should review legal requirements and ensure scraping activities follow ethical and regulatory guidelines.

5. How much do web scraping APIs typically cost?

Pricing varies depending on the provider and the complexity of the websites being scraped. Most APIs charge either per request, per successful response, or through monthly subscription tiers. Costs usually increase when scraping dynamic or heavily protected websites that require proxies or browser rendering.